What is Codesign, Really?

When Leanlab was founded in 2013, the term codesign wasn’t in our vocabulary. But the idea was already there.

I had identified two core issues in public education. First, education was lacking creativity and innovation at a time when technological progress was accelerating. Second, power dynamics within public education were lopsided, resulting in families, students, and teachers losing trust in the institutions and public education systems that served them.

At the time, I worked at a charter school up the hill from the creative hub of Kansas City: a coffee shop for the avant-garde across the street from the city’s arts incubator program. I had close friends in this creative community, where collaboration and art collectives were a constant. Much of the time, no one person “owned” a project; rather, work evolved through interplay, iteration, and shared authorship. It echoed the community organizing principles my parents had exposed us to growing up, with a shared central theme of questioning and repositioning power dynamics.

Could this ethos be applied to solving the entrenched problems in public education? Could it subvert entrenched power dynamics? What if we approached education innovation as creative collaborations with communities?

These questions laid the foundation for Leanlab Education. We started by listening, conducting community research with teachers, superintendents, students, parents, and local leaders across Kansas City. We gathered and analyzed data from all zip codes and demographics on what community members saw as the most urgent challenges and possibilities for innovation.[1] Then, we connected those community-defined priorities to early-stage education entrepreneurs, offering funding, coaching, and real-time feedback from the people their products were intended to serve.

At the time, we called it community-driven innovation. Today, we call it codesign, a nod to our attempt to right-size power dynamics within the design process.

However, this early approach had its limitations.

I misspoke; really, states and districts were responding to top-down testing incentives initiated by Bush’s 2001 No Child Left Behind Act and accelerated by Obama’s Race to the Top initiative, which ran from 2009 to 2015.

We quickly ran into the structural misalignments of the edtech market. Investment dollars that would have allowed founders (most of whom were educators and parents) to quit their day jobs were nonexistent. Instead, investment dollars flowed to connected founders building tech products. Speed to market was prioritized over making easy-to-use products that measurably impacted student learning. Products were built to woo the administrators who make purchasing decisions, not for the classroom. The dominant assumption was that codesign was inefficient. In reality, it was just undervalued.

We saw well-resourced ideas fail because they hadn’t been tested with the people they were supposed to help. We saw bottom-up solutions flounder because they failed to capture commercial interests. And we saw how power, capital, and influence continued to shape which tools gained traction—regardless of whether they worked for students and teachers.

So we pivoted.

Leanlab’s Evolution

Leanlab transitioned from a community-driven accelerator to a full-scale research and development lab. Our aim was to build a model of codesign that stretched from idea to implementation—with educators, researchers, and product developers working together throughout the process.

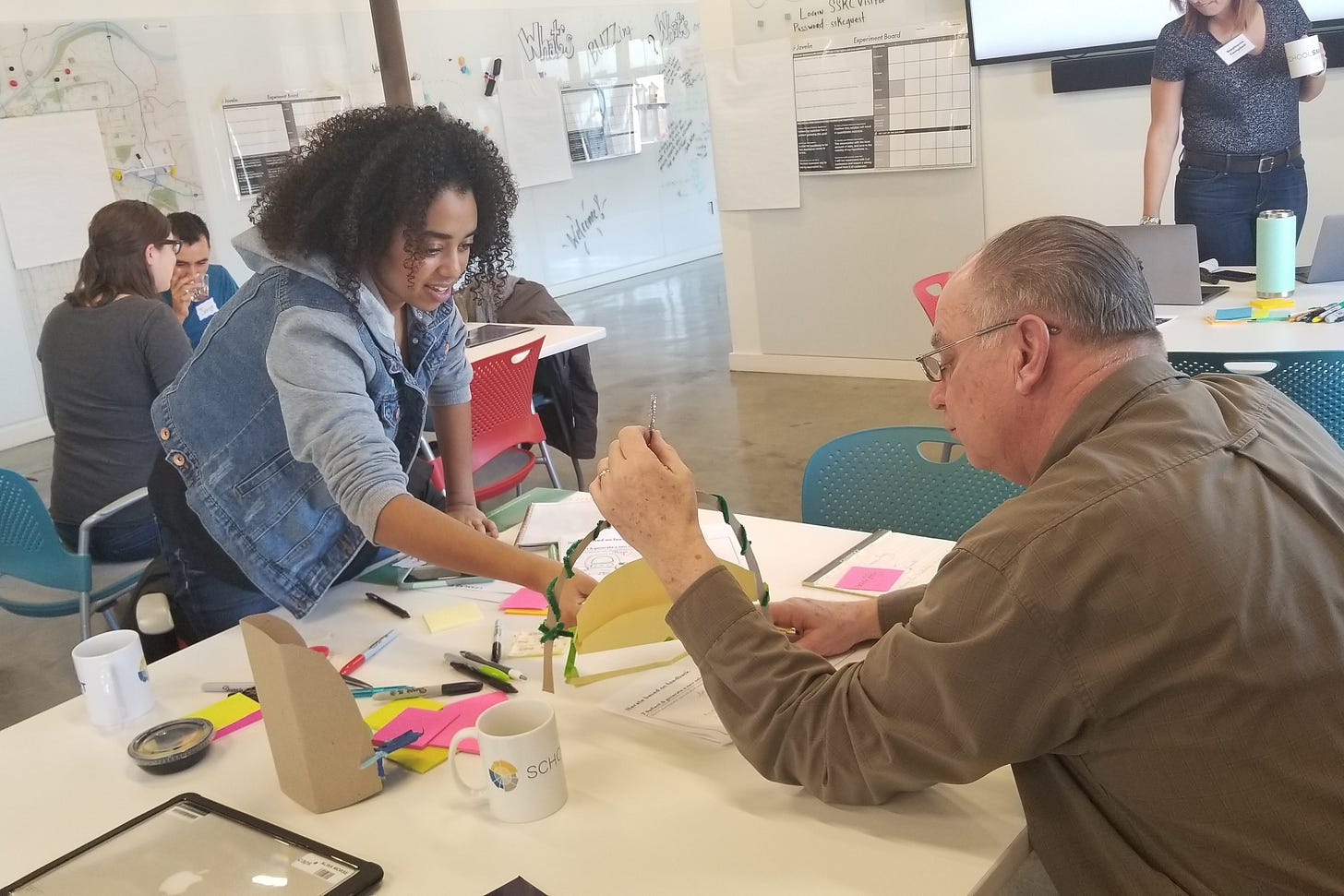

At first, this meant bringing early-stage edtech companies into our incubator and embedding them in school communities over the course of a school year. Educators and founders sat together with Leanlab’s research team and codesigned research questions from scratch. We called the educators “school champions.” Our researchers facilitated, and everyone had a seat at the table.

But most of these incubator products were still in the early proof-of-concept phase. The educators we partnered with were deeply invested and wanted to see results that mattered. What we found, though, was that these early-stage pilots often produced over-designed studies with small sample sizes. The data wasn’t strong enough to draw meaningful conclusions about impact. We needed to adjust our methods.

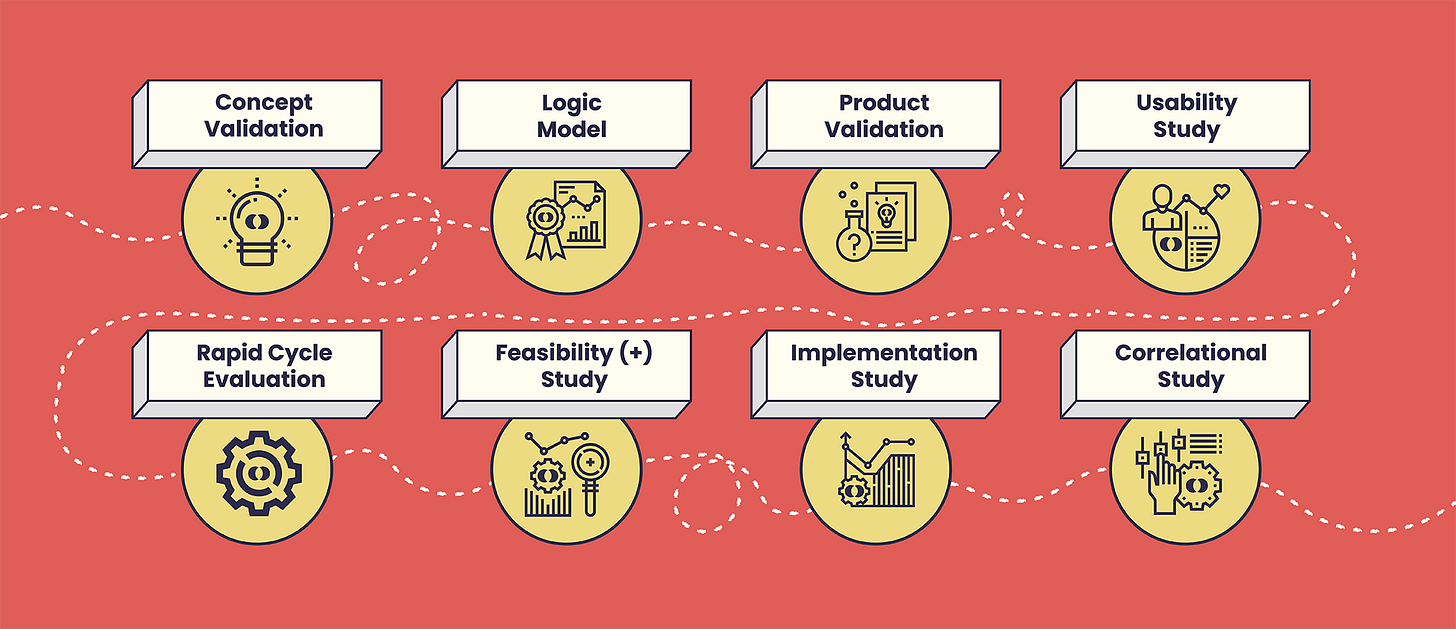

That’s when we formalized what we now call Leanlab’s Codesign Product Research Journey—a staged approach to research that aligns study design with product maturity, and keeps educators involved from start to finish. At the close of each study, regardless of design or scope, companies receive an “action plan” with specific recommendations for product improvements, while educators’ impact is affirmed through a shared final report.

Here’s how it works:

Concept and Product Validation

At the earliest stage of research—when an idea is still conceptual, or a product is an early prototype—we leverage our educator networks, including the Codesign Collective and AGILE (American Group of Innovative Learning Environments), to test whether a product concept addresses real needs. We ask: Is this solving the right problem? How would it add value? What would have to change for educators to adopt it or pay for it?

During concept and product validation, the research participants are critical advisors, helping entrepreneurs discern if the innovation is worth pursuing.

Usability Studies

Once there’s a prototype or minimum viable product (MVP), we begin usability research. School communities provide ongoing feedback from their trials as developers iterate on the product to fix technical bugs and improve navigation, features, and more. These studies are deeply action-oriented. Every report’s action plan offers specific recommendations, and we award a codesign badge to products that act on the majority of educator feedback within a defined timeframe. Our goal isn’t just insight; it’s change.

During usability studies, participants embody the essence of the word co-designer, providing critical insights that shape the product’s development in a measurable way.
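
The badge rule described above (acting on the majority of educator feedback within a defined timeframe) can be sketched in a few lines of Python. This is only an illustration, not Leanlab's actual tooling: the `Recommendation` record, the `earns_codesign_badge` function, and the 90-day default window are all hypothetical, and "majority" is read here as strictly more than half.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Recommendation:
    """One action-plan item (hypothetical record, for illustration only)."""
    description: str
    issued_on: date
    addressed_on: Optional[date] = None  # None means the company has not acted on it


def earns_codesign_badge(recs: List[Recommendation], window_days: int = 90) -> bool:
    """Return True if more than half of the recommendations were addressed
    within `window_days` of being issued. The 90-day default is an assumption."""
    if not recs:
        return False
    on_time = sum(
        1
        for r in recs
        if r.addressed_on is not None
        and 0 <= (r.addressed_on - r.issued_on).days <= window_days
    )
    return on_time > len(recs) / 2
```

A product with three recommendations, two of them addressed inside the window, would qualify under this reading; shortening the window so that only one counts as on time would not.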

Feasibility Studies and Rapid Cycle Evaluation

At this stage, we study whether the product can be used reliably in real classrooms. Educators give input not just on the product, but also on the implementation conditions: what training is needed, what barriers to use exist, and where the tool fits in real instructional time. At this point, we’re engaging at the district and school administrative level, ensuring that we comply with district data-sharing protocols and procedures and that our work aligns with district-stated priorities. We may use pre- and post-tests to look for early evidence that the product is having its intended effect.

For companies eager to codesign quickly, we employ rapid cycle evaluation methodology, collecting feedback and making product improvements over multiple cycles—particularly useful for addressing early bugs. Rapid cycle evaluations have been one of our most popular codesign offerings in recent years, as they work well for companies building AI-powered learning solutions.

“Thank you for putting the student’s names into their decodable readers. They said they felt famous and what they wrote to you has meaning!” - Educator in LitLab.ai rapid cycle feasibility study

During the feasibility and rapid cycle evaluation stages, participants provide feedback on how both the product and its implementation need to improve before the product can be used reliably and sustainably in the classroom.

Implementation and Correlational Studies

Now we look at whether the product can scale. We pair implementation studies with correlational analyses to explore possible links between product use and student outcomes. Here, codesign shifts: educators help define the research question and shape the data collection strategy, ensuring the research reflects their context and community’s needs.

Quasi-Experimental Studies

In later phases, we move toward designs that support stronger causal inference. Educators codesign implementation plans that allow us to build treatment and control comparisons without disrupting instruction. The codesign here isn’t about product tweaks; it’s about equitable, feasible research design and improving learning outcomes.

Through this model, codesign evolves. Educators start as co-creators of the product, then become co-creators of the research design and, crucially, co-investigators of learning outcomes.

But we’re not done.

Democratizing Research

The next frontier for codesign is democratizing research itself.

What would it take for educators to drive their own inquiries—without waiting on an external research partner, product team, or funding? How might they design their own instruments, launch their own studies, and analyze their own data? How might they lead—not just contribute to—education R&D?

Technologies like artificial intelligence are beginning to make this imaginable. As generative tools become more accessible and trustworthy, we can envision a future where educators write their own code, create their own products, and run their own experiments—all grounded in the realities of their classrooms. At Leanlab, we’re working to equip educators with the research knowledge and codesign experience to thrive in this future.

This is the vision: a distributed R&D ecosystem led by those closest to the work. Educators as co-researchers. Co-creators. Co-authors.

That’s what codesign was always meant to be.

For years, we published annual Listening Tour papers summarizing findings. We experimented with different ways to subvert power dynamics here. We coded survey participant data based on positionality, giving greater weight to those farthest from power and opportunity (a parent in an under-resourced zip code received a larger point value for their perspective than a superintendent, for instance). The goal was to prioritize innovation opportunities and ideas that were coming from the ground up.
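
To make the weighting idea concrete, here is a minimal sketch of positionality-weighted tallying. The roles, the point values in `POSITION_WEIGHTS`, and the `rank_priorities` function are hypothetical; the only thing taken from the description above is that a parent's response counts for more points than a superintendent's.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical point values: larger for respondents farther from institutional power.
POSITION_WEIGHTS: Dict[str, float] = {
    "student": 3.0,
    "parent": 3.0,
    "teacher": 2.0,
    "superintendent": 1.0,
}


def rank_priorities(
    responses: Iterable[Tuple[str, str]],
    weights: Dict[str, float] = POSITION_WEIGHTS,
) -> List[Tuple[str, float]]:
    """Sum weighted points per priority and return priorities from highest
    to lowest score. Each response is a (role, priority) pair."""
    totals: Dict[str, float] = defaultdict(float)
    for role, priority in responses:
        totals[priority] += weights[role]
    # Sort by score descending, then by name so ties are ordered deterministically.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))
```

Under these assumed weights, one parent naming a priority outranks two superintendents naming a different one, which is the kind of ground-up tilt the weighting was meant to produce.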

We continued this experimentation with power dynamics through participatory grantmaking practices. At one point we had a panel of community representatives, again ranked by power (philanthropists, for-profit investors, school system leaders, teachers, parents, students), who regularly assessed the solutions in our incubator and ultimately distributed grant money in line with their assessments. Interestingly, we found that groupthink was palpable regardless of weighted formulas; people of all backgrounds tended to “agree” with the perspectives and ideas of those with the most power in the group, i.e., the thoughts of philanthropists and investors tended to dominate. But that’s a story for another time…